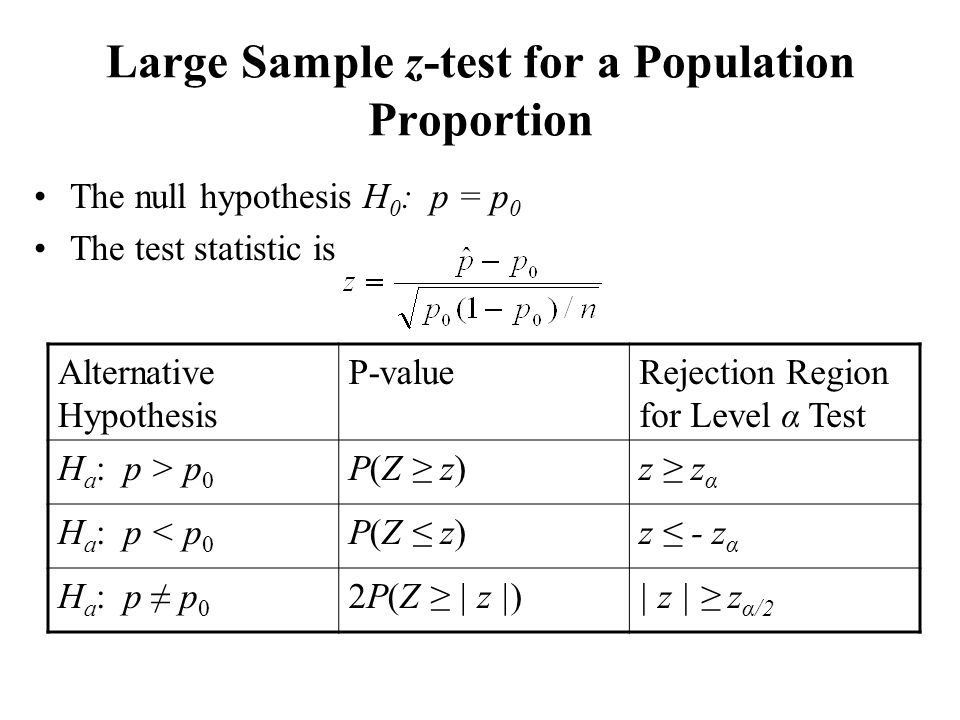

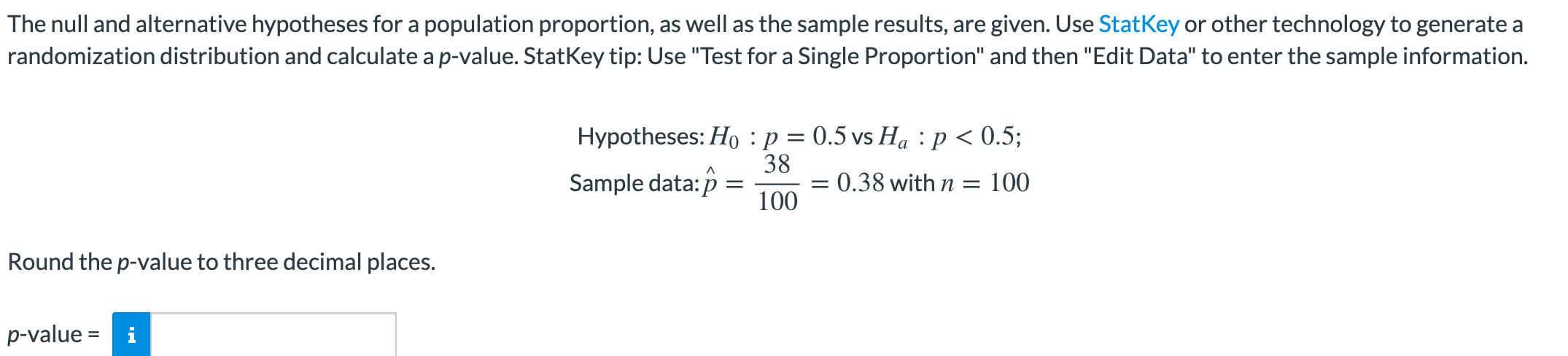

Once we have our null and alternative hypotheses chosen, and our sample data collected, how do we decide whether or not to reject the null hypothesis? In a nutshell, it's this: if the observed results are unlikely, assuming that the null hypothesis is true, we say the result is statistically significant, and we reject the null hypothesis. In other words, the observed results are so unusual that our original assumption in the null hypothesis must not have been correct.

Your textbook references three different methods for testing hypotheses. Because P-values are so much more widely used, we will be focusing on this method. If you're interested in learning any of the other methods, feel free to read through the textbook.

Why does this matter? Well, suppose we take a sample of 100 online students and find that 74 of them are part-time. You might recall that 68.5% of ECC students in general are part-time. So is observing 74% part-time in our sample unusual? How do we know? We need the distribution of the sample proportion, $\hat{p}$!

So what we do is create a test statistic based on our sample, and then use a table or technology to find the probability of observing a result at least as extreme as ours, assuming the null hypothesis is true. This probability is the P-value.

## Testing Claims Regarding the Population Proportion Using P-Values

In this first section, we assume we are testing some claim about the population proportion. As usual, two conditions must be true before the test can be carried out.

Step 2: Decide on a level of significance, α, depending on the seriousness of making a Type I error. (α will often be given as part of a test or homework question, but this will not be the case in the outside world.)

Step 5: Reject the null hypothesis if the P-value is less than the level of significance, α. (You should also include a measure of the strength of the results, based on the P-value.)

### Calculating P-Values

Here $z_0$ is the observed value of the test statistic and $Z$ is a standard normal random variable.

- Right-tailed tests: $P\text{-value} = P(Z > z_0)$.
- Two-tailed tests: $P\text{-value} = 2P(Z > |z_0|)$.

It may seem odd to multiply the probability by two, since "or more extreme" seems to imply the area in one tail only. We multiply by two because, even though the observed result fell on one side, we didn't know before collecting the data on which side it would fall.

Since the P-value represents the probability of observing our result or one more extreme, the smaller the P-value, the more unusual our observation was. So the smaller the P-value, the stronger the evidence supporting the alternative hypothesis:

- P-value < 0.01: very strong evidence supporting the alternative hypothesis.
- 0.01 ≤ P-value < 0.05: strong evidence supporting the alternative hypothesis.
- 0.05 ≤ P-value < 0.1: moderate evidence supporting the alternative hypothesis.
- P-value ≥ 0.1: weak to no evidence supporting the alternative hypothesis.
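As a sketch of the whole procedure, here is a minimal Python version of a one-proportion z-test applied to the ECC example (74 part-time students out of 100 online students, compared with 68.5% of all ECC students). It assumes the standard normal-approximation test statistic $z_0 = (\hat{p} - p_0)/\sqrt{p_0(1-p_0)/n}$, a right-tailed alternative $H_1: p > 0.685$, and α = 0.05. None of these choices are stated in the text, and the function names `upper_tail`, `one_proportion_z_test`, and `evidence` are hypothetical. Standard normal tail areas come from Python's built-in `math.erfc`, so no statistics library is needed.

```python
import math


def upper_tail(z: float) -> float:
    """P(Z > z) for a standard normal Z, computed with the complementary error function."""
    return 0.5 * math.erfc(z / math.sqrt(2))


def one_proportion_z_test(x: int, n: int, p0: float, tail: str = "right") -> tuple[float, float]:
    """Return (z0, P-value) for H0: p = p0 using the normal approximation.

    tail: "right" (H1: p > p0), "left" (H1: p < p0), or "two" (H1: p != p0).
    Assumes the conditions for the normal approximation hold.
    """
    p_hat = x / n                          # sample proportion
    se = math.sqrt(p0 * (1 - p0) / n)      # standard error computed under H0
    z0 = (p_hat - p0) / se                 # test statistic
    if tail == "right":
        p_value = upper_tail(z0)           # P(Z > z0)
    elif tail == "left":
        p_value = upper_tail(-z0)          # P(Z < z0), by symmetry of the normal curve
    elif tail == "two":
        p_value = 2 * upper_tail(abs(z0))  # 2P(Z > |z0|)
    else:
        raise ValueError("tail must be 'right', 'left', or 'two'")
    return z0, p_value


def evidence(p_value: float) -> str:
    """Map a P-value to the rough evidence scale for the alternative hypothesis."""
    if p_value < 0.01:
        return "very strong"
    if p_value < 0.05:
        return "strong"
    if p_value < 0.1:
        return "moderate"
    return "weak to no"


if __name__ == "__main__":
    alpha = 0.05  # assumed level of significance
    # ECC example, assuming the right-tailed alternative H1: p > 0.685.
    z0, p_value = one_proportion_z_test(74, 100, 0.685, tail="right")
    print(f"z0 = {z0:.2f}, P-value = {p_value:.4f}")
    print("reject H0" if p_value < alpha else "do not reject H0")
    print(f"{evidence(p_value)} evidence for H1")
```

Run as a script, this should report $z_0 \approx 1.18$ and a right-tailed P-value of about 0.118, so at α = 0.05 we would not reject the null hypothesis: weak to no evidence that online students are more likely to be part-time. Passing `tail="two"` doubles the tail area, giving about 0.236.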